Building a Research-Grade Equity Backtesting Platform

A deep dive into the architecture and validation framework behind a custom equity backtesting platform — from hit-rate analysis and Kaplan-Meier survival curves to elastic-net macro factor modeling and walk-forward cross-validation.

This article walks through the architecture and key design decisions behind our equity backtesting platform — a system we built to test trading strategies against historical data and, more importantly, to statistically validate whether the results represent genuine skill or noise. The platform doesn't just tell you how a strategy performed. It tells you whether you should believe the results.

Key ideas in brief

- Most backtesting tools produce an equity curve and a Sharpe ratio. This platform adds four independent validation layers: hit-rate analysis, skill testing (AUC/ROC + Brier scores), randomised entry testing (1000 iterations), and Kaplan-Meier survival analysis.

- A macro factor modeling module uses elastic-net logistic regression with walk-forward time-series cross-validation to test whether macro variables (VIX, yields, DXY) have predictive power for stock returns — with ablation studies to isolate which factors actually matter.

- The strategy interface is deliberately minimal: one function, one DataFrame in, one list of trades out. Everything else — equity simulation, metrics, statistical tests — is handled by the platform.

Why Build This

Every equity backtesting tool produces the same output: an equity curve, a Sharpe ratio, a win rate. The problem isn't generating these numbers — it's knowing whether to trust them.

A strategy with a 2.0 Sharpe over 10 years of data could be genuinely capturing a market inefficiency. Or it could be overfitted to the specific sequence of events in that period. Or its edge could come entirely from three outlier trades that happened to catch pandemic-era moves. Or its entry timing might be irrelevant — random entries with the same holding period might produce similar results.

Existing tools don't answer these questions. They show you the curve and leave the interpretation to you. We needed a platform that systematically tests whether a strategy's results are distinguishable from luck across multiple independent methodologies:

- Hit-rate analysis: Do entries actually reach profit targets more often than loss targets within a fixed lookforward window?

- Skill testing: Does the strategy's entry condition scoring predict trade outcomes better than random (measured by AUC and Brier score)?

- Randomised entry testing: If we shift every entry date randomly within ±10 days, keep each holding period fixed, and repeat 1000 times, do the original entries still outperform the shifted ones?

- Survival analysis: How quickly do trades reach profit vs. loss targets? Is the time-to-profit significantly shorter than time-to-loss?

These four tests, applied together, make it substantially harder for a spurious result to survive validation. A strategy that passes all four has a meaningfully higher probability of representing genuine edge.

System Architecture

Backtest Execution Flow

Strategy Framework

The Interface Contract

Every strategy is a Python function implementing a single entry point:

```python
from typing import Dict, List

import pandas as pd


def run_strategy(data: pd.DataFrame) -> List[Dict]:
    """
    Receives: DataFrame with Date, Open, High, Low, Close, Volume columns
              and a 365-day lookback buffer for indicator warm-up.

    Returns: List of trade dicts, each containing:
      - 'buy_date', 'buy_price'
      - 'sell_date', 'sell_price'
      - 'return' (decimal, e.g. 0.05 for 5%)
      - 'days_held'
      - 'exit_reason' (optional)
      - Entry condition values for skill testing (optional):
        'entry_rsi', 'entry_macd_hist', 'entry_kc_bb_ratio'
    """
    trades: List[Dict] = []  # strategy logic appends one dict per closed trade
    return trades
```

The optional entry condition fields are what make the deep testing module powerful. When a strategy records why it entered — the RSI value, the MACD histogram level, the KC/BB ratio — the skill testing module can evaluate whether higher-confidence entries produce better outcomes. Strategies that don't include these fields still work for basic backtesting but skip the entry quality analysis.
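To make the contract concrete, here is a minimal sketch of a strategy that satisfies it. It is a toy, not one of the platform's strategies: the SMA-crossover rule, the `sma_window` and `hold_days` defaults, and the `entry_sma_gap` field are all hypothetical, chosen only to show the shape of the returned trade dicts.

```python
from typing import Dict, List

import pandas as pd


def run_strategy(data: pd.DataFrame, sma_window: int = 20, hold_days: int = 5) -> List[Dict]:
    """Toy rule: buy when Close crosses above its SMA, sell hold_days bars later."""
    close = data["Close"].reset_index(drop=True)
    dates = data["Date"].reset_index(drop=True)
    sma = close.rolling(sma_window).mean()

    trades: List[Dict] = []
    i = sma_window  # first bar whose previous bar already has a valid SMA
    while i + hold_days < len(close):
        crossed_up = close[i - 1] <= sma[i - 1] and close[i] > sma[i]
        if crossed_up:
            j = i + hold_days
            trades.append({
                "buy_date": dates[i], "buy_price": float(close[i]),
                "sell_date": dates[j], "sell_price": float(close[j]),
                "return": float(close[j] / close[i] - 1),
                "days_held": hold_days,  # counted in trading bars here
                "exit_reason": "time_stop",
                # Hypothetical entry condition value for skill testing:
                "entry_sma_gap": float(close[i] / sma[i] - 1),
            })
            i = j  # no overlapping positions
        i += 1
    return trades
```

Because the extra parameters have defaults, the function can still be called with a single DataFrame argument, which is all the interface requires.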

Equity Curve Simulation

The backtesting engine simulates a $100,000 portfolio through multiplicative compounding:

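```python
equity = 100_000.0

for trade in trades:
    # Each closed trade compounds the whole account by its price ratio.
    equity *= trade['sell_price'] / trade['buy_price']

# Daily equity tracking (while holding), where `equity` is the
# account value at the moment the open position was entered:
daily_equity = equity * (current_close / entry_price)
```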

Each backtest also runs two benchmark comparisons automatically:

- Buy & Hold: What if you just held the underlying for the entire period?

- SPY: What if you held the market index instead?

These benchmarks contextualise the strategy's performance. A 15% annual return sounds good until you learn SPY did 20% over the same period.
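The comparison can be sketched in a few lines. This is an illustration under simple assumptions (fully invested on every trade, no costs, benchmark bought at the first close and held to the last); the function names are ours, not the platform's.

```python
def compound_equity(trades, initial=100_000.0):
    """Equity after each closed trade, compounding the full account each time."""
    equity = initial
    path = [equity]
    for trade in trades:
        equity *= trade["sell_price"] / trade["buy_price"]
        path.append(equity)
    return path


def buy_and_hold(closes, initial=100_000.0):
    """Equity from buying at the first close and holding to the last close."""
    return initial * closes[-1] / closes[0]


def excess_over_benchmark(trades, benchmark_closes, initial=100_000.0):
    """Strategy final equity minus benchmark final equity, in account currency."""
    return compound_equity(trades, initial)[-1] - buy_and_hold(benchmark_closes, initial)
```

A negative excess means the strategy would have been beaten by simply holding the benchmark, whatever its standalone return looks like.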

Deep Testing: Statistical Validation

This is the core differentiator. Deep testing takes a strategy's trade list and subjects it to four independent statistical tests. The goal is to answer one question: is this strategy distinguishable from a random entry strategy?

Hit-Rate Analysis

For every trade entry, the engine looks forward N days (default 30) and asks two questions:

- Did the price reach the profit target (default +5%) at any point during the window?

- Did the price reach the loss target (default -5%) at any point during the window?

```python
profit_target_price = entry_price * (1 + profit_target_pct / 100)
loss_target_price = entry_price * (1 + loss_target_pct / 100)  # loss_target_pct is negative, e.g. -5

# `entry` is the bar index of the entry; lookforward_days defaults to 30
window = slice(entry, entry + lookforward_days)
hit_profit = max(highs[window]) >= profit_target_price
hit_loss = min(lows[window]) <= loss_target_price
```

This is fundamentally different from just looking at whether the trade was profitable. A trade might have been closed at +2% by the strategy's exit logic, but the hit-rate analysis reveals that the underlying actually reached +5% within the lookforward window — suggesting the strategy is leaving money on the table with premature exits.

The engine also calculates price deltas at fixed intervals — 5-day, 10-day, and 20-day changes from each entry — and the realised volatility over each trade's holding period. These distributions reveal whether the strategy's entries tend to precede moves of unusual magnitude.
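Aggregating the per-trade checks into hit rates might look like the sketch below. It works on plain lists of daily highs and lows indexed by bar; the function names and the `(entry_idx, entry_price)` input format are assumptions made for the example.

```python
def target_hits(highs, lows, entry_idx, entry_price,
                profit_target_pct=5.0, loss_target_pct=-5.0, lookforward=30):
    """Check whether each target was touched inside the lookforward window."""
    profit_price = entry_price * (1 + profit_target_pct / 100)
    loss_price = entry_price * (1 + loss_target_pct / 100)
    window_highs = highs[entry_idx: entry_idx + lookforward]
    window_lows = lows[entry_idx: entry_idx + lookforward]
    return {
        "hit_profit": max(window_highs) >= profit_price,
        "hit_loss": min(window_lows) <= loss_price,
    }


def hit_rates(highs, lows, entries, **kwargs):
    """entries: list of (entry_idx, entry_price). Returns the fraction hitting each target."""
    results = [target_hits(highs, lows, idx, price, **kwargs) for idx, price in entries]
    n = len(results)
    return {
        "profit_hit_rate": sum(r["hit_profit"] for r in results) / n,
        "loss_hit_rate": sum(r["hit_loss"] for r in results) / n,
    }
```

Note that a single trade can hit both targets inside the same window, so the two rates need not sum to one.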

Skill Tests

Skill testing evaluates whether the strategy's entry signals contain genuine predictive information. Two complementary metrics are used:

AUC/ROC (Area Under the Curve): Measures how well the strategy's entry condition score discriminates between profitable and unprofitable trades. An AUC of 0.5 means the entry score is no better than random; 0.7+ suggests meaningful signal quality; 0.9+ is exceptional.

Brier Score: Measures probability calibration — how well the predicted confidence of a trade matches its actual outcome frequency. A Brier score of 0.25 means the predictions are no better than always predicting 50/50. Lower is better.
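Both metrics are simple enough to write out by hand, which makes their meaning easier to see. The sketch below is a dependency-free illustration, not the platform's code (which, per the tech stack, uses scikit-learn's metrics); it assumes outcomes are coded 1 for a winning trade and 0 for a losing one.

```python
def auc(scores, outcomes):
    """Probability that a random winner outscores a random loser (ties count half).

    This pairwise definition equals the area under the ROC curve.
    """
    winners = [s for s, y in zip(scores, outcomes) if y == 1]
    losers = [s for s, y in zip(scores, outcomes) if y == 0]
    if not winners or not losers:
        raise ValueError("need at least one winner and one loser")
    wins = sum((w > l) + 0.5 * (w == l) for w in winners for l in losers)
    return wins / (len(winners) * len(losers))


def brier(probabilities, outcomes):
    """Mean squared error between predicted probability and the 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probabilities, outcomes)) / len(outcomes)


scores = [0.9, 0.8, 0.4, 0.3]
outcomes = [1, 0, 1, 0]
print(auc(scores, outcomes))            # 0.75: three of four winner/loser pairs ranked correctly
print(brier([0.5] * 4, outcomes))       # 0.25: the always-50/50 baseline
```

AUC only needs a ranking, so any entry score works. The Brier score needs scores that are already probabilities between 0 and 1.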

The entry condition scoring uses strategy-specific ranking functions:

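```python
# Built-in ranking factors for entry quality scoring.
# Each entry maps a dict `f` of indicator values at entry to a score (higher = better).
ranking_factors = {
    'macd_rsi': lambda f: f['MACD_histogram'] + f['MACD_trend'] + f['RSI'],
    'kcbb_ratio': lambda f: f['KC_width'] / f['BB_width'],
    'ema_sma_ratio': lambda f: f['EMA_30'] / f['SMA_120'],
    'rsi_value': lambda f: 100 - f['RSI'],  # prefer oversold
    'rv_ratio': lambda f: f['RV_30d_SMA'] / f['RV_current'],  # prefer vol compression
    'ema_slope_kcbb': lambda f: abs(f['EMA_slope']) + f['KCBB_ratio'],
}
```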

These factors let the platform rank trades by entry quality and test whether higher-ranked entries produce better returns. If they do, the strategy is capturing real signal. If returns are uncorrelated with entry quality, the strategy may be profiting from market drift rather than signal.

Bootstrap analysis with configurable iterations provides confidence intervals on all skill metrics.
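One common way to get such intervals is the percentile bootstrap: resample the trades with replacement, recompute the metric, and read the interval off the sorted results. The sketch below assumes that approach (the article does not say which bootstrap variant the platform uses) and applies it to a pairwise AUC.

```python
import random


def pairwise_auc(scores, outcomes):
    """AUC as the share of winner/loser pairs ranked correctly (ties count half)."""
    winners = [s for s, y in zip(scores, outcomes) if y == 1]
    losers = [s for s, y in zip(scores, outcomes) if y == 0]
    wins = sum((w > l) + 0.5 * (w == l) for w in winners for l in losers)
    return wins / (len(winners) * len(losers))


def bootstrap_ci(scores, outcomes, metric, n_iterations=1000, confidence=0.95, seed=0):
    """Percentile bootstrap interval for `metric(scores, outcomes)`."""
    if len(set(outcomes)) < 2:
        raise ValueError("need both winners and losers")
    rng = random.Random(seed)
    n = len(scores)
    estimates = []
    while len(estimates) < n_iterations:
        idx = [rng.randrange(n) for _ in range(n)]
        sample_outcomes = [outcomes[i] for i in idx]
        if len(set(sample_outcomes)) < 2:
            continue  # metric undefined without both classes; draw again
        estimates.append(metric([scores[i] for i in idx], sample_outcomes))
    estimates.sort()
    alpha = (1 - confidence) / 2
    lower = estimates[int(alpha * n_iterations)]
    upper = estimates[min(n_iterations - 1, int((1 - alpha) * n_iterations))]
    return lower, upper
```

If the interval for AUC includes 0.5, the sample does not rule out an entry score with no discriminative power, however good the point estimate looks.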

Randomised Entry Testing

The most brutal test. Every entry date is randomly shifted within a configurable window (default ±10 days), and each trade is re-simulated from the shifted entry with its original holding period. This is repeated 1000 times, producing a distribution of outcomes under randomised entry timing.

```python
import random
from datetime import timedelta

n_iterations = 1000
randomised_returns = []

for _ in range(n_iterations):
    randomised_trades = []
    for trade in original_trades:
        shift = random.randint(-window, window)  # inclusive on both ends
        new_entry = find_nearest_trading_day(trade['buy_date'] + timedelta(days=shift))
        # Keep the original holding period so only the entry timing changes.
        new_trade = simulate_trade_from(new_entry, trade['days_held'])
        randomised_trades.append(new_trade)
    randomised_returns.append(mean_return(randomised_trades))

# p-value: fraction of randomised iterations that match or beat the original
p_value = sum(r >= original_return for r in randomised_returns) / n_iterations
```

The output is a p-value. If p < 0.05, the strategy's entry timing produces significantly better results than randomly shifted entries with the same holding period, so the timing contains real information. If p ≥ 0.05, the strategy's returns are statistically indistinguishable from those of shifted entries, which means the edge (if any) comes from the holding period and market drift, not from the entry signal. Because the shifts stay within ±10 days of the original entries, the test measures how much the precise timing matters, not whether the strategy picks the right market phase.
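For readers who want to run the idea end to end, here is a self-contained sketch on a plain list of closes. It makes simplifying assumptions that differ from the description above: entries are bar indices, not dates, shifts are counted in trading bars, and the p-value uses an add-one correction so it can never be exactly zero. All names are ours.

```python
import random


def trade_return(closes, entry_idx, holding_days):
    """Return from entering at entry_idx and exiting holding_days bars later."""
    exit_idx = min(entry_idx + holding_days, len(closes) - 1)
    return closes[exit_idx] / closes[entry_idx] - 1


def mean(values):
    return sum(values) / len(values)


def randomised_entry_test(closes, entries, holding_days, window=10,
                          n_iterations=1000, seed=0):
    """Return (original mean return, p-value) under random entry shifts."""
    rng = random.Random(seed)
    original = mean([trade_return(closes, e, h) for e, h in zip(entries, holding_days)])
    beats = 0
    for _ in range(n_iterations):
        shifted = []
        for e, h in zip(entries, holding_days):
            new_e = e + rng.randint(-window, window)
            new_e = max(0, min(new_e, len(closes) - 2))  # clamp to a valid bar
            shifted.append(trade_return(closes, new_e, h))
        if mean(shifted) >= original:
            beats += 1
    return original, (beats + 1) / (n_iterations + 1)


# Sawtooth prices: entries placed exactly at each trough are perfectly timed,
# so almost no random shift should match them.
closes = [110 - k if k <= 10 else 90 + k for k in range(20)] * 6
original, p_value = randomised_entry_test(closes, [10, 30, 50, 70, 90], [10] * 5, window=5)
```

On this artificial series the p-value comes out far below 0.05, which is the pattern a genuinely timing-dependent strategy should show.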

Survival Analysis

Kaplan-Meier survival curves visualise how quickly trades reach their profit vs. loss targets. This answers a question that aggregate statistics miss: even if the hit rates for profit and loss are identical, is the median time-to-profit shorter than the median time-to-loss?

A strategy where trades reach +5% in a median of 8 days but take 18 days to reach -5% has a meaningful time-asymmetry — it captures gains faster than it accumulates losses. This asymmetry isn't visible in win rate or Sharpe ratio but is a real edge in live trading because it reduces capital lockup and increases trade frequency.

The platform uses the lifelines library for Kaplan-Meier estimation, producing cumulative probability curves for both profit and loss target events.
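To show what the estimator does under the hood, here is a hand-rolled Kaplan-Meier sketch in plain Python. It is not the lifelines implementation the platform uses; it assumes each trade contributes a duration (days until the target was hit, or until the lookforward window closed) and a flag saying whether the target was actually hit. Trades that never hit the target are right-censored: they count as "at risk" until they drop out but never as events.

```python
def kaplan_meier(durations, observed):
    """Return [(t, S(t))] at each distinct event time.

    durations: days until the target was hit, or until observation stopped.
    observed:  True if the target was hit, False if the trade was censored.
    """
    event_times = sorted({d for d, hit in zip(durations, observed) if hit})
    survival, curve = 1.0, []
    for t in event_times:
        at_risk = sum(1 for d in durations if d >= t)
        events = sum(1 for d, hit in zip(durations, observed) if hit and d == t)
        survival *= 1 - events / at_risk
        curve.append((t, survival))
    return curve


def median_time(curve):
    """First time at which S(t) falls to 0.5 or below; None if it never does."""
    for t, s in curve:
        if s <= 0.5:
            return t
    return None


# Five trades hit the profit target; two ran out the 30-day window without hitting it.
durations = [2, 4, 4, 7, 9, 30, 30]
observed = [True, True, True, True, True, False, False]
profit_curve = kaplan_meier(durations, observed)
print(median_time(profit_curve))  # 7
```

S(t) is the probability that a trade has not yet hit the target by day t, so the cumulative probability curve described above is 1 - S(t). Running the same function on time-to-loss data gives the second curve for comparison.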

Test Parameters

All deep testing parameters are user-configurable:

| Parameter | Default | Purpose |

|---|---|---|

| Profit target | +5% | Price level considered a "hit" for profit |

| Loss target | -5% | Price level considered a "hit" for loss |

| Lookforward window | 30 days | How far ahead to check for target hits |

| Randomisation window | ±10 days | Range for entry date shifting |

| Randomisation iterations | 1000 | Number of random entry simulations |

| Confidence level | 95% | Statistical significance threshold |

| Minimum trades | 10 | Minimum sample size for valid testing |

Macro Factor Modeling

The macro testing module answers a different question from deep testing. Deep testing asks "is this strategy's edge real?" Macro testing asks "can macro variables predict whether a stock will reach its profit target?"

Feature Engineering

The module takes macro factor time series — VIX, VVIX, TNX (10-year yield), DXY (dollar index), MOVE (bond volatility), FX rates — and expands each into four features:

```python
import pandas as pd

# factor_data: DataFrame of daily macro series, one column per factor
features = pd.DataFrame(index=factor_data.index)

for factor in macro_factors:
    series = factor_data[factor]
    features[f'{factor}_level'] = series
    features[f'{factor}_delta_1d'] = series.diff(1)
    features[f'{factor}_delta_5d'] = series.diff(5)
    features[f'{factor}_zscore_20d'] = (
        (series - series.rolling(20).mean()) / series.rolling(20).std()
    )

# All features shifted +1 day to prevent look-ahead bias
features = features.shift(1)
```

The target variable is binary: 1 if the stock reaches +5% within 30 days, 0 otherwise. This transforms a continuous return prediction problem into a classification problem — which is better suited to the question traders actually care about (will this trade hit my target?) rather than the question academics typically ask (what will the return be?).

The +1 day shift is critical. Without it, today's VIX level would be used to predict today's forward return — but in live trading you'd be making the decision at close, and the return starts from tomorrow. The shift ensures all features are genuinely available at decision time.
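The target side needs the same care as the features. Below is a sketch of how the binary label could be built. It rests on assumptions the article does not spell out: the target is measured from the decision-day close, a "hit" is judged on subsequent daily highs, and the 30 days are trading bars. Rows near the end of the sample, where the forward window is incomplete, get no label so they are not silently treated as misses.

```python
def label_targets(closes, highs, target_pct=5.0, lookforward=30):
    """Label each bar: 1 if a later high reaches close * (1 + target) within
    `lookforward` bars, 0 if not, None where the forward window is incomplete."""
    labels = []
    for t, close in enumerate(closes):
        future_highs = highs[t + 1: t + 1 + lookforward]  # starts the day after the decision
        if len(future_highs) < lookforward:
            labels.append(None)  # not enough future data to judge
            continue
        labels.append(int(max(future_highs) >= close * (1 + target_pct / 100)))
    return labels


closes = [100, 100, 100, 100, 100]
highs = [100, 101, 106, 102, 100]
print(label_targets(closes, highs, lookforward=2))  # [1, 1, 0, None, None]
```

Dropping the unlabelled tail before training keeps the most recent rows from biasing the base rate downward.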

Elastic-Net Logistic Regression

The model uses elastic-net regularisation — a combination of L1 (lasso) and L2 (ridge) penalties:

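Loss = Σᵢ [ -yᵢ·log(pᵢ) - (1-yᵢ)·log(1-pᵢ) ] + λ·(0.5·‖w‖₁ + 0.25·‖w‖₂²)

where:

pᵢ = 1 / (1 + exp(-w·xᵢ))

l1_ratio = 0.5 (balanced L1/L2), which gives the weights 0.5 and (1 - 0.5)/2 = 0.25

λ = penalty strength (scikit-learn's C parameter is its inverse, C = 1/λ, so a larger C means weaker regularisation)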

L1 drives irrelevant factor coefficients to exactly zero (feature selection). L2 stabilises the fit when factors are correlated, which macro factors usually are (VIX and MOVE, for example, are highly correlated). The combination yields a sparse, interpretable model: the surviving non-zero coefficients mark the factors the model relies on, and their signs indicate direction (a positive coefficient means an increase in the factor predicts a higher probability of hitting +5%).
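The objective can be evaluated directly, which helps when sanity-checking a fitted model. The function below is a plain-Python sketch of a penalised logistic loss with an elastic-net term; the parameter name `strength` is ours and plays the role of the penalty weight, and no intercept term is included.

```python
import math


def elastic_net_log_loss(weights, X, y, strength=1.0, l1_ratio=0.5):
    """Summed logistic loss plus an elastic-net penalty on the weights."""
    loss = 0.0
    for row, label in zip(X, y):
        z = sum(w * x for w, x in zip(weights, row))
        p = 1 / (1 + math.exp(-z))
        loss += -label * math.log(p) - (1 - label) * math.log(1 - p)
    l1 = sum(abs(w) for w in weights)   # lasso term: pushes weights to exactly zero
    l2 = sum(w * w for w in weights)    # ridge term: shrinks correlated weights together
    penalty = strength * (l1_ratio * l1 + 0.5 * (1 - l1_ratio) * l2)
    return loss + penalty


# With all-zero weights every prediction is 0.5 and the penalty vanishes.
print(elastic_net_log_loss([0.0, 0.0], [[1, 2], [3, 4]], [1, 0]))  # 2 * ln(2) ≈ 1.386
```

With `l1_ratio=0.5` the penalty on weights `[2.0, -1.0]` is 0.5·3 + 0.25·5 = 2.75, matching the 0.5 and 0.25 coefficients in the formula.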

Walk-Forward Cross-Validation

Standard k-fold cross-validation is invalid for time series because it allows future data to inform past predictions. The platform uses strict walk-forward validation:

# Initial training window: 3 years (756 trading days)

# Test window: 1 quarter (63 trading days)

# Step: 1 quarter forward

Fold 1: Train 2014-01 to 2016-12, Test 2017-Q1

Fold 2: Train 2014-01 to 2017-03, Test 2017-Q2

Fold 3: Train 2014-01 to 2017-06, Test 2017-Q3

...

Each fold produces AUC, Brier score, precision, recall, and F1. The pattern across folds reveals whether the model's predictive power is stable or decaying; decay across successive folds is a common sign of regime change.
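The fold schedule above can be generated with a few lines of index arithmetic. This sketch assumes the expanding-window layout shown in the fold listing (training always starts at the first row) and uses the stated defaults of 756 training days and 63-day test windows; the function name is ours.

```python
def walk_forward_folds(n_samples, initial_train=756, test_size=63, step=63):
    """Yield (train_indices, test_indices) with an expanding training window.

    Every test index is strictly later than every training index in its fold.
    """
    train_end = initial_train
    while train_end + test_size <= n_samples:
        yield range(0, train_end), range(train_end, train_end + test_size)
        train_end += step


for train, test in walk_forward_folds(1000):
    print(f"train rows 0..{train[-1]}, test rows {test[0]}..{test[-1]}")
```

A trailing partial quarter is dropped, so the last fold always has a full test window. scikit-learn's `TimeSeriesSplit` offers a similar ordered split if a library implementation is preferred.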

Ablation Study

After the walk-forward validation, the platform automatically runs an ablation study: systematically removing each factor and measuring the performance drop.

```python
# Baseline: walk-forward metrics with every factor included
full_metrics = walk_forward_cv(all_features, target)
importance = {}

for factor in macro_factors:
    # This factor's features: level, delta_1d, delta_5d, zscore_20d
    factor_columns = [c for c in all_features.columns if c.startswith(f'{factor}_')]
    reduced_features = all_features.drop(columns=factor_columns)

    # Re-run walk-forward validation without this factor
    reduced_metrics = walk_forward_cv(reduced_features, target)

    # Measure degradation relative to the full model
    importance[factor] = {
        'auc_drop': full_metrics['auc'] - reduced_metrics['auc'],
        'brier_increase': reduced_metrics['brier'] - full_metrics['brier'],
    }
```

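A large AUC drop when a factor is removed means that factor is load-bearing: the model genuinely relies on it. A near-zero drop means the factor is redundant (its information is already captured by other factors) or irrelevant. This guards against the common mistake of including VIX, VVIX, and MOVE in the same model and concluding all three matter, since the ablation study shows whether each one adds information beyond the others. One caveat: when several factors are close substitutes, removing any single one may produce almost no drop because the rest compensate, so a near-zero drop for each does not mean the group as a whole is irrelevant.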

Regime Analysis

The module detects market regimes (bull/bear based on momentum, high/low vol based on VIX quintiles) and evaluates whether the model's predictive power varies across regimes. A model that works in low-vol bull markets but fails during stress periods is useful to know about before deploying capital.
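A quintile-based volatility split can be sketched with the standard library. The mapping used here (bottom quintile "low_vol", top quintile "high_vol", everything between "mid_vol") is our assumption, as are the function names. The cut points are computed over the whole sample, which is fine for after-the-fact analysis but would be look-ahead if used as a live signal.

```python
import statistics


def vix_regimes(vix_levels):
    """Label each observation by VIX quintile: bottom fifth 'low_vol',
    top fifth 'high_vol', everything else 'mid_vol'."""
    cuts = statistics.quantiles(vix_levels, n=5)  # four cut points
    low_cut, high_cut = cuts[0], cuts[-1]
    labels = []
    for level in vix_levels:
        if level <= low_cut:
            labels.append("low_vol")
        elif level > high_cut:
            labels.append("high_vol")
        else:
            labels.append("mid_vol")
    return labels


def metric_by_regime(labels, values, metric):
    """Group per-observation values by regime label and apply `metric` to each group."""
    groups = {}
    for label, value in zip(labels, values):
        groups.setdefault(label, []).append(value)
    return {label: metric(group) for label, group in groups.items()}
```

Passing per-trade hit indicators and a mean function as `metric` gives the hit rate in each regime; passing an AUC function on (score, outcome) pairs gives the per-regime skill comparison described above.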

Multi-Asset Testing

1-to-N Backtesting

Run a single strategy across multiple symbols in one request (up to 10 symbols per run). The platform supports theme-based symbol selection — grouping by sector, market cap, or custom watchlists — and produces a comparative metrics table across all symbols.

This reveals whether a strategy generalises across assets or is specific to one stock's idiosyncratic dynamics. A momentum strategy that works on AAPL, MSFT, NVDA, and GOOGL but fails on XOM, JNJ, and PG is capturing tech-sector momentum, not a universal pattern.

Relative Performance

Ratio-based backtesting between two symbols. The engine constructs a price ratio series (Stock A / Stock B), applies the strategy to the ratio, and evaluates whether the strategy can time the relative outperformance:

ratio_series = stock_a['Close'] / stock_b['Close']

trades = run_strategy(ratio_series) # Entry when ratio is undervalued

This is a pairs trading framework: long the underperformer, short the outperformer, and profit from mean reversion in the ratio. The backtest tracks whether the strategy's entries coincide with ratio inflection points.

Strategy Comparison

Run up to 3 strategies simultaneously on the same symbol and date range. The platform produces overlaid equity curves, comparative metrics tables, and identifies which strategy performs best under different market conditions. This is the simplest way to answer "is my new strategy actually better than my old one, or did I just test it on a better period?"

Visual Analytics

The platform generates a suite of charts designed to expose variable-level impact on trade outcomes. The philosophy is that aggregate metrics (Sharpe, win rate) tell you what happened but not why. The visual analytics layer answers why by decomposing results across every factor the strategy uses.

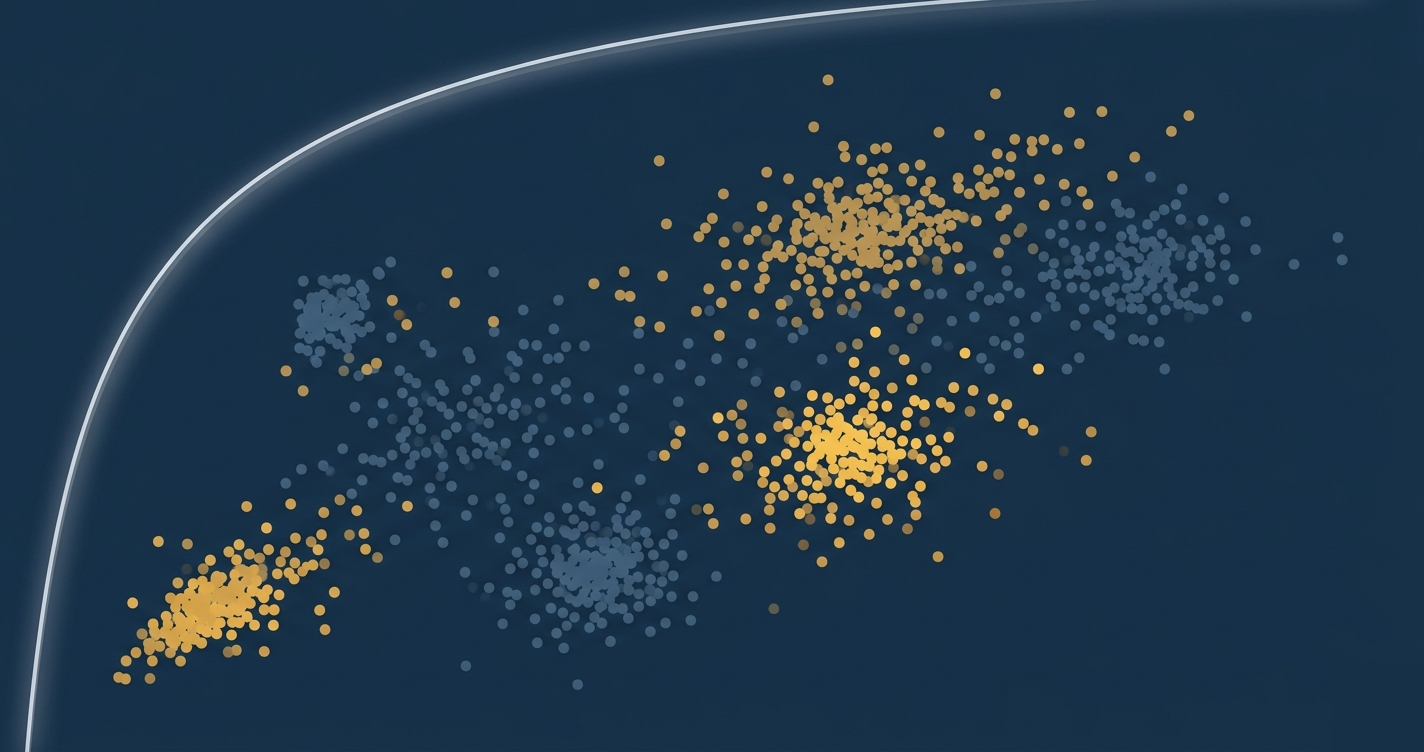

Entry Factor Scatter Plots

The most revealing visualisation in the platform. For each entry condition the strategy records, the engine produces a scatter plot with trade return on the X-axis and factor value on the Y-axis. Each point is a single trade, colour-coded by outcome — darker for winners, brighter for losers. When multiple symbols are tested via 1-to-N backtesting, each stock gets its own colour, producing a multi-symbol overlay that reveals whether factor behaviour is consistent across assets.

Seven factor dimensions are plotted simultaneously:

| Factor | What it measures | What a useful pattern looks like |

|---|---|---|

| KC/BB Ratio | Keltner Channel width / Bollinger Band width — volatility compression | Winners cluster at high ratios (above 0.8), losers spread across all values |

| RV exit - RV entry | Change in realised volatility during the trade | Winners cluster where vol decreased (entered during high vol, exited during low vol) |

| RV entry | 30-day annualised realised volatility at entry | Visible regime bands — strategies may only work in specific vol environments |

| MACD Histogram | Momentum strength at entry | Winners cluster at moderately negative values (momentum about to turn) |

| MACD Trend | Day-over-day change in MACD histogram | Winners cluster at positive trend (histogram improving) — momentum inflection |

| RSI Value | 14-period Relative Strength Index at entry | Winners concentrated in 40-60 range (not extended), losers at extremes |

| RSI Trend | Day-over-day change in RSI | Winners at positive RSI trend (strength improving from neutral zone) |

The value of these plots is in the absence of pattern as much as its presence. If winners and losers are uniformly mixed across a factor's range, that factor isn't discriminating — the strategy's entry condition for that variable isn't adding value. This is faster and more intuitive than running regressions, because a human eye immediately spots clustering, banding, and outlier-driven results that statistical summaries can mask.

ROC Curves

Each deep test produces a Receiver Operating Characteristic curve plotting the true positive rate against the false positive rate across all possible entry score thresholds. The diagonal represents a random classifier (AUC = 0.5). The strategy's curve bows toward the top-left corner in proportion to its discriminative ability.

The AUC value is displayed in the chart title. An AUC of 0.65 means the strategy's entry scoring correctly ranks a random winner above a random loser 65% of the time — modest but non-trivial. Below 0.55 suggests the entry scoring is essentially noise.

Equity and Drawdown Curves

Standard but well-executed. Multiple strategies are overlaid on a single time-axis chart for direct comparison. Drawdown charts use filled area (red, 10% opacity) beneath the drawdown line to make the severity of drawdown periods visually proportional to their depth and duration.

Both chart types support time-axis zoom, legend toggling per strategy, and hover tooltips showing exact values at each date.

Macro Factor Visualisation

The ablation study renders as a colour-coded table where rows with large AUC drops (important factors) are highlighted in red, and rows with near-zero drops (irrelevant factors) are green. This immediately surfaces which factors are load-bearing in the model.

A ranked badge display shows the top 3 most important factors in red, orange, and purple — a quick reference for which macro variables deserve monitoring attention.

Coefficient analysis splits features into positive and negative contributors, showing both the direction and magnitude of each factor's influence on the prediction. This answers the question: "if VIX rises by 1 z-score, does that make a +5% hit more or less likely, and by how much?"

Volatility Decomposition Charts

Three time-series charts for index volatility analysis:

- Index realised volatility — 30-day rolling vol with filled area, showing volatility regime changes over time

- Realised dispersion — constituent variance minus index variance, identifying periods where stock-picking has structural advantage

- Constituent volatility overlay — top 10 constituents plotted simultaneously, revealing which names drive index vol and which are idiosyncratically quiet

Performance Metrics

| Metric | Formula | What it reveals |

|---|---|---|

| CAGR | (final / initial)^(1/years) - 1 | Annualised compounding rate |

| Sharpe Ratio | (mean daily return / std) * sqrt(252) | Return per unit of total risk |

| Sortino Ratio | (mean daily return / downside std) * sqrt(252) | Return per unit of downside risk only |

| Calmar Ratio | CAGR / abs(max drawdown) | Return per unit of worst-case loss |

| Max Drawdown | min((equity - peak) / peak) | Worst peak-to-trough decline |

| VaR (95%) | 5th percentile of daily returns | Worst expected daily loss at 95% confidence |

| CVaR (95%) | mean(returns where return < VaR) | Expected loss in the worst 5% of days |

| Skewness | Third standardised moment of returns | Positive = right tail heavier (desirable) |

| Kurtosis | Fourth standardised moment of returns | Higher = fatter tails (more extreme events) |

VaR and CVaR are worth highlighting. Most retail backtesting tools stop at Sharpe and max drawdown. VaR is the daily loss that is exceeded on roughly 1 day in 20. CVaR (Conditional Value at Risk, also called Expected Shortfall) is the average loss on those worst days, so it captures tail risk that VaR alone misses. A strategy with good Sharpe but poor CVaR is one bad week away from a drawdown the Sharpe ratio didn't warn you about.
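The formulas in the table translate almost line for line into code. The sketch below uses only the standard library and makes a few convention choices that the table leaves open: sample standard deviation for Sharpe, a zero target for Sortino's downside deviation, no risk-free rate, and a historical (empirical) VaR taken as the best return inside the worst 5% tail, with CVaR averaging that whole tail.

```python
import math
import statistics


def max_drawdown(equity):
    """Worst peak-to-trough decline as a negative fraction."""
    peak, worst = equity[0], 0.0
    for value in equity:
        peak = max(peak, value)
        worst = min(worst, (value - peak) / peak)
    return worst


def sharpe(daily_returns):
    return statistics.mean(daily_returns) / statistics.stdev(daily_returns) * math.sqrt(252)


def sortino(daily_returns):
    # Downside deviation: root mean square of the negative returns only.
    downside = math.sqrt(sum(min(r, 0.0) ** 2 for r in daily_returns) / len(daily_returns))
    return statistics.mean(daily_returns) / downside * math.sqrt(252)


def var_cvar(daily_returns, level=0.95):
    """Historical (VaR, CVaR) expressed as returns, so losses are negative."""
    ordered = sorted(daily_returns)
    k = max(1, int(len(ordered) * (1 - level)))  # number of days in the worst tail
    tail = ordered[:k]
    return tail[-1], sum(tail) / k
```

For 100 days whose five worst returns are -5%, -4%, -3%, -2% and -1%, `var_cvar` gives a VaR of -1% and a CVaR of -3%: the threshold looks mild, while the average bad day is three times worse.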

Volatility Testing

The volatility module analyses index constituent behaviour for sector indices (SMH, XLE, XLK). For each index, it calculates:

- Constituent-level realised volatility: 30-day rolling vol for each stock in the index

- Realised dispersion: Average constituent variance minus index-level variance — this measures how much the constituents are moving independently vs. together

- Regime detection: Classifying volatility environments for conditional analysis

High dispersion means individual stocks are diverging from the index — a favourable environment for stock-picking. Low dispersion means stocks are moving in lockstep — a difficult environment for any strategy that relies on idiosyncratic moves.
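The dispersion measure is easy to illustrate with two stylised cases. This sketch follows the definition above (average constituent variance minus index variance) over a single window; it assumes an equally weighted average across constituents and uses population variance, neither of which the article specifies.

```python
import statistics


def realised_dispersion(constituent_returns, index_returns):
    """Average constituent variance minus index variance over the same window.

    constituent_returns: dict mapping symbol -> list of daily returns.
    """
    variances = [statistics.pvariance(r) for r in constituent_returns.values()]
    return statistics.mean(variances) - statistics.pvariance(index_returns)


up_down = [0.01, -0.01, 0.01, -0.01]
down_up = [-0.01, 0.01, -0.01, 0.01]

# Two stocks moving in opposite directions: their moves cancel in the index.
offsetting = realised_dispersion({"A": up_down, "B": down_up}, [0.0, 0.0, 0.0, 0.0])

# Two stocks moving in lockstep: the index is as volatile as its members.
lockstep = realised_dispersion({"A": up_down, "B": up_down}, up_down)
```

The offsetting case yields a positive dispersion equal to the full constituent variance, while the lockstep case yields zero, which matches the stock-picking intuition in the paragraph above.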

Limitations

Strategy execution: Strategies run via exec() with restricted globals. This is adequate for a research tool but wouldn't be appropriate for a multi-tenant production system where untrusted code execution is a security concern.

Data storage: CSV files on the local filesystem. No database, no concurrent access control. Works well for single-user research but would need a proper data layer for team use.

Position sizing: The equity curve simulation is a simple multiplicative model (equity × sell/buy ratio). There's no explicit contract sizing, margin modeling, or partial fills. The focus is on signal validation rather than execution simulation.

Macro factor model: Elastic-net logistic regression is interpretable but linear. Non-linear interactions between factors (e.g., the combination of high VIX and rising yields being predictive when neither alone is) would require tree-based models or neural networks at the cost of interpretability.

Survival analysis: Kaplan-Meier is non-parametric — it makes no assumptions about the distribution of time-to-event. This is conservative but means we can't extrapolate beyond the observed data range.

Tech Stack

| Layer | Technology |

|---|---|

| Frontend | React 19, Chart.js 4.5, chroma-js |

| Backend | FastAPI (ASGI), Python 3.x |

| Data processing | Pandas, NumPy |

| ML / Statistics | scikit-learn (Elastic-Net, metrics), SciPy, lifelines (Kaplan-Meier) |

| Market data | yfinance (optional download scripts) |

| Storage | Local CSV (OHLCV data), JSON (test results), Python files (strategies) |

| Deployment | React dev server (port 3000) + FastAPI (port 8000), CORS-enabled |

Reproducing This

The platform is not open-sourced, but the architecture is reproducible:

- Backtesting engine: `run_strategy()` interface → equity curve via multiplicative compounding → standard metrics (Sharpe, Sortino, Calmar, VaR, CVaR)

- Hit-rate analysis: For each entry, check if highs/lows within N-day window breach profit/loss targets

- Skill testing: Use scikit-learn's `roc_auc_score` and `brier_score_loss` on strategy entry condition scores vs. trade outcomes

- Randomised entry testing: Shift entries ±N days, re-simulate 1000 times, compute p-value

- Survival analysis: `lifelines.KaplanMeierFitter` on time-to-target-hit data

- Macro factor modeling: Elastic-net logistic regression with walk-forward CV, +1 day feature shift, automatic ablation study

- Volatility testing: 30-day rolling constituent vol, realised dispersion = avg constituent variance - index variance

The novel contribution is the four-layer validation framework. Any single test can be fooled. Hit rates can look good by chance. Skill tests can overfit. Randomised entries might miss timing-dependent edges. Survival curves can be skewed by outliers. But a strategy that passes all four has been stress-tested from independent angles — making it substantially more likely to represent a genuine, deployable edge.

Disclaimer: Altus Labs is not authorised or regulated by the Financial Conduct Authority (FCA). Altus Labs is a research publication and this content is provided for informational and educational purposes only. It does not constitute investment advice, a financial promotion, or an invitation to engage in investment activity. See our full disclaimer for more information.